Why Observability matters.

At Exostiv Labs, we think that ‘observability’ (or ‘visibility’), that is, ‘the ability to observe (and understand) a system from its I/Os’, is relevant, and even key, to FPGA debug.

I’d like to show it with a real example taken from the field.

A typical Video processing debugging problem

We had an issue with an FPGA-based video processing system. At some point, the ‘data header’ of a frame of a video stream running at 24 frames per second (24 fps) went wrong. The problem was rather unpredictable and occurred randomly. And, by the way, the system was not our design: by the time we got our hands on it, improving the design methodology to avoid bugs was not an option anymore.

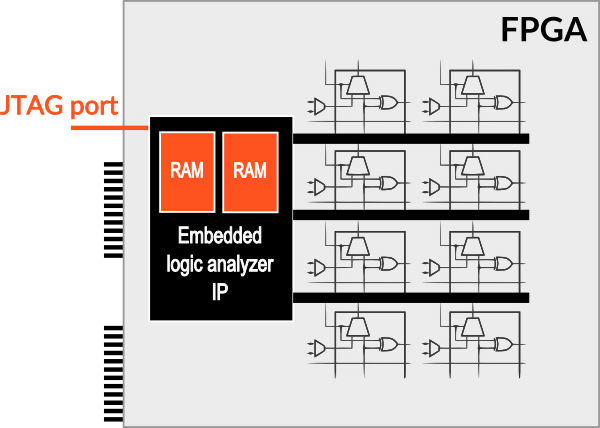

Approach nr 1 : a standard embedded logic analyzer storing the captured debug information in some FPGA memory

Our engineer set up a traditional embedded logic analyzer tool to capture 1.024 bits per frame, taken from the frame header (as the content of the pictures was not very relevant to him). 1 kbit per frame was deemed rather good observability: the engineer hoped to be able to capture and understand the history of events that were creating the bug. All the captured information was stored in the FPGA memory; he was lucky enough to be able to reserve up to 32 kbit of the FPGA memory for debugging purposes.

Using this approach, our engineer was able to observe the header information of:

32 kbit / 1.024 bit = 32 frames

At 24 fps, this is the equivalent of 1,33 seconds of the movie.
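The arithmetic above can be checked with a few lines of Python. This is only a sketch: the constant and function names are made up for illustration, and it assumes that 1 kbit means 1.024 bits, which is what makes 32 kbit divide into exactly 32 frames.

```python
# Capture depth of Approach nr 1 (debug data stored in FPGA memory).
# Names are illustrative; 1 kbit is assumed to be 1024 bits.
BITS_PER_FRAME = 1024                 # bits captured from each frame header
FPGA_DEBUG_MEMORY_BITS = 32 * 1024    # 32 kbit reserved for debug
FRAME_RATE_FPS = 24                   # video frame rate


def capture_depth(memory_bits, bits_per_frame, fps):
    """Return (frames, seconds) of history that fit in the debug memory."""
    frames = memory_bits // bits_per_frame
    return frames, frames / fps


frames, seconds = capture_depth(FPGA_DEBUG_MEMORY_BITS, BITS_PER_FRAME, FRAME_RATE_FPS)
print(f"{frames} frames, {seconds:.2f} s of video")  # 32 frames, 1.33 s of video
```

Changing `BITS_PER_FRAME` shows the trade-off directly: with a fixed memory, every extra bit observed per frame shortens the stretch of movie that can be captured.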

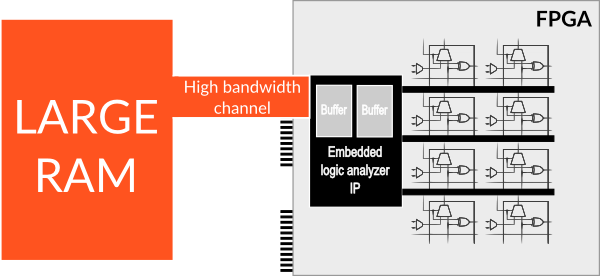

Approach nr 2 : Sending the captured debug information to an external memory

Now, let’s say that we have an external memory as big as 8 GB and sufficient bandwidth to send the debug information ‘on-the-fly’. We’ll check the consequences on the FPGA resources later.

8 Gbyte equals 64 Gigabit of memory.

64 Gbit / 1.024 bit ≈ 64.000.000 frames

64 million frames can be observed with the same ‘accuracy’ (remember that we extract only 1.024 bits from each header).

At 24 fps, this is the equivalent of 64 M / 24 = 2,67 million seconds, or 2,67 M / 3.600 ≈ 740 hours of the movie!

Usually, a movie is about 2 hours long. So, we’ll be able to ‘see the full movie’ with the same ‘debugging accuracy’.

Actually, with 8 GB of external memory and a 2-hour movie, we have a 740 / 2 = 370 ‘scale-up factor’. This basically means that we’ll be able to extract not 1.024 bits from each frame, but 1.024 x 370 = 378.880 bits per frame.
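Here is the same reasoning as a small Python sketch. The names are illustrative, and the 64 million frames figure is the rounded value used in this post. The exact count depends on whether 8 GB is read as a binary or a decimal quantity, so the sketch prints both for comparison.

```python
# Observation window and scale-up factor of Approach nr 2 (external memory).
# Names are illustrative; 64 million frames is the post's rounded figure.
BITS_PER_FRAME = 1024
FRAME_RATE_FPS = 24
MOVIE_HOURS = 2


def observable_hours(frames, fps=FRAME_RATE_FPS):
    """Hours of video covered by a given number of captured frames."""
    return frames / fps / 3600


frames_rounded = 64_000_000                          # figure used in the text
frames_binary = (8 * 2**30 * 8) // BITS_PER_FRAME    # 8 GiB  -> 67,108,864 frames
frames_decimal = (8 * 10**9 * 8) // BITS_PER_FRAME   # 8e9 B  -> 62,500,000 frames

hours = observable_hours(frames_rounded)             # about 740.7 hours
scale_factor = int(hours / MOVIE_HOURS)              # 370, rounded down as in the text
scaled_bits_per_frame = BITS_PER_FRAME * scale_factor

print(f"{hours:.1f} hours, scale-up factor {scale_factor}, "
      f"{scaled_bits_per_frame} bits per frame")
print(f"binary units: {observable_hours(frames_binary):.0f} h, "
      f"decimal units: {observable_hours(frames_decimal):.0f} h")
```

Whichever unit convention is used, the window stays in the range of several hundred hours, so the conclusion does not depend on the rounding.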

Gigabyte-range storage eliminates the ‘guesswork’

We have just seen that scaling up the total capture size of the debug information has a positive impact on how much of each picture can be seen AND how much of the movie can be observed.

When a bug may occur at any moment during a full 2-hour video stream, wouldn’t you feel more secure if you were certain to have captured the bug AND the history of events that created it?

What about the required bandwidth?

Up to this point, we have considered a very theoretical example, assuming that we would be able to stream the debug information out to the external 8 GB memory. In fact, we do have this capability, with a very wide margin.

At 24 fps, extracting 378.880 bits per frame requires a total bandwidth of: 24 x 378.880 = roughly 9,1 Mbps on average.

EXOSTIV provides up to 8 GB of memory and up to 4 x 12.5 Gbps of bandwidth to extract debug information from the FPGA.
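To put the two figures side by side, here is a short Python sketch. The names are illustrative, and it compares the average debug data rate with the raw aggregate line rate quoted above. It assumes nothing about protocol overhead, so the usable payload rate would be somewhat lower than the raw figure.

```python
# Required debug bandwidth versus the quoted aggregate link rate.
# Names are illustrative; the link figure is the raw line rate, with
# no protocol overhead taken into account.
FRAME_RATE_FPS = 24
SCALED_BITS_PER_FRAME = 378_880       # bits extracted per frame after scale-up
LINK_COUNT = 4
LINK_RATE_BPS = 12.5e9                # 12.5 Gbps per link

required_bps = FRAME_RATE_FPS * SCALED_BITS_PER_FRAME   # 9,093,120 bits/s
available_bps = LINK_COUNT * LINK_RATE_BPS              # 50 Gbps in total
headroom = available_bps / required_bps                 # about 5,500x

print(f"required: {required_bps / 1e6:.1f} Mbps")       # required: 9.1 Mbps
print(f"available: {available_bps / 1e9:.0f} Gbps")     # available: 50 Gbps
print(f"headroom: roughly {headroom:,.0f}x")
```

Even with a generous allowance for overhead, the average rate needed here is several thousand times below what the links can carry.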

So…

Stop the guesswork during FPGA debug,

Scale up your tools, and

Increase your observation capability.

Thank you for reading

– Frederic